Azure DNS Private Resolver: The End of Custom DNS VMs in Your Hub

In this article

- The Hybrid DNS Problem

- Why the Forwarder VMs Were a Liability

- What DNS Private Resolver Does

- Inbound Endpoints: On-Premises Resolving Azure

- Outbound Endpoints: Azure Resolving On-Premises

- Architecture Patterns

- A Note on Regional Resiliency

- The Migration: Replacing Your DNS VMs

- The DNS Resolution Chain with Private Endpoints

- Cost

- Time to Retire the VMs

2026 update: This article was written when DNS Private Resolver reached GA in late 2022. The architecture patterns and migration guidance remain valid. The service has since matured and is now the standard approach for hybrid DNS in Azure. Regional resiliency note added below. For the broader private endpoint DNS story and common DNS failures we see in audits, see Azure Private Link and What a Landing Zone Audit Finds.

Before Azure DNS Private Resolver reached GA, almost every enterprise hub-spoke architecture in Azure had DNS forwarder VMs sitting in the hub. Two Windows Server VMs running the DNS role. Or a pair of Linux boxes running BIND or CoreDNS. They forwarded queries between on-premises Active Directory DNS and Azure Private DNS zones. They were always there, and they were always a liability.

DNS Private Resolver is a fully managed service that replaces those VMs. After migrating several hybrid environments to it, the recommendation is clear: if you still have DNS forwarder VMs in your hub, plan their retirement.

The Hybrid DNS Problem

The root issue is that on-premises and Azure use different DNS systems, and they need to talk to each other.

On-premises, you have Active Directory DNS. Your domain controllers serve corp.contoso.com and all the internal zones your organisation has accumulated over the years.

In Azure, you have Azure DNS - the built-in resolver at 168.63.129.16 that every VM uses by default. When you deploy Private Endpoints, the A records land in Azure Private DNS zones like privatelink.blob.core.windows.net. Azure DNS resolves those zones natively - as long as the Private DNS zone is linked to the VNet.

The problem: on-premises DNS doesn’t know about Azure Private DNS zones. Azure DNS doesn’t know about corp.contoso.com. Neither side can resolve the other’s records.

The traditional fix: deploy DNS forwarder VMs in the hub VNet. Configure them as conditional forwarders - queries for corp.contoso.com go to on-premises domain controllers over ExpressRoute or VPN, and queries for Azure Private DNS zones go to 168.63.129.16. Then set every VNet’s DNS server setting to point at these forwarder VMs instead of the Azure default.

It works. But you’re running infrastructure that does nothing except forward DNS packets, and if it goes down, every VM in every spoke loses name resolution.

Azure docs: Private DNS zone overview · Private endpoint DNS configuration

Why the Forwarder VMs Were a Liability

We’ve seen the same failure modes across every client running this pattern:

Single point of failure. Even with two VMs behind a load balancer, DNS failover isn’t instant. We’ve seen 30-60 second resolution gaps during failover - long enough for health probes to fail and applications to throw errors.

Patching risk. Every resource in every spoke is one reboot away from losing name resolution. You stagger the patching, you test the failover, and you still hold your breath.

No auto-scaling. DNS query volume scales with your Azure footprint. Nobody sizes DNS VMs for peak load. They get sized once and forgotten.

Monitoring gaps. Most teams monitor whether the VM is running. Few monitor whether DNS resolution is actually working. The VM can be healthy while the DNS service is hung or the conditional forwarder target is unreachable.

What DNS Private Resolver Does

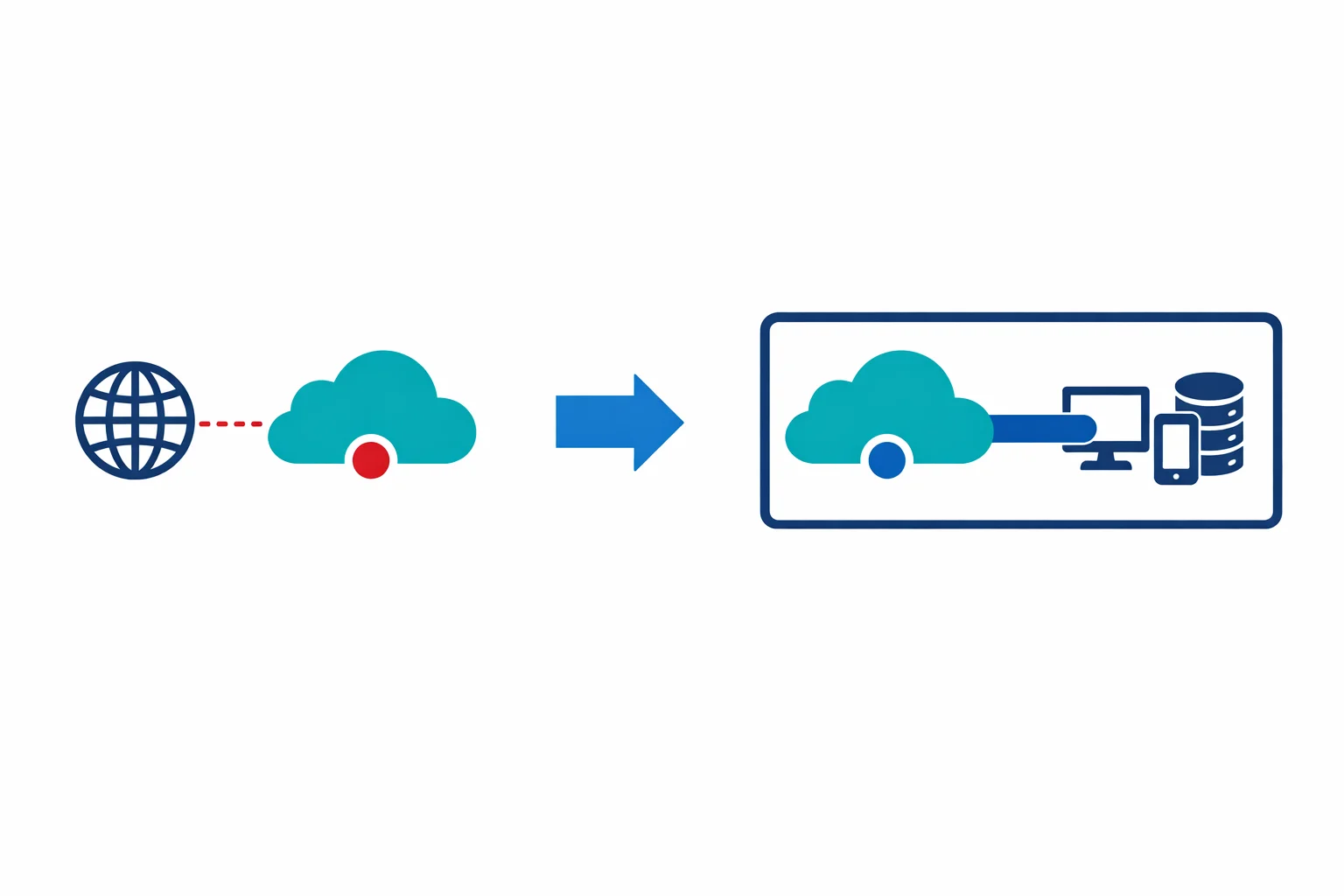

DNS Private Resolver is a managed service that you deploy into a VNet. It has two types of endpoints:

Inbound endpoints receive DNS queries from outside Azure - typically from on-premises networks. You get a private IP address in a dedicated subnet, and on-premises DNS servers forward queries to that IP. The resolver handles resolution against Azure Private DNS zones linked to the VNet.

Outbound endpoints send DNS queries from Azure to external resolvers - typically on-premises DNS servers. You attach DNS forwarding rulesets to the outbound endpoint, defining which domains get forwarded where.

The combination replaces both directions of the conditional forwarding chain that the forwarder VMs provided.

On-premises DNS                        Azure DNS Private Resolver
┌──────────────────┐                   ┌──────────────────────────┐
│ corp.contoso.com │◄── outbound ──────│ Outbound endpoint        │
│ (AD DNS)         │    forwarding     │ Ruleset:                 │
│                  │                   │ corp.contoso.com → DC    │
│                  │                   │                          │
│ Conditional fwd: │── inbound ───────►│ Inbound endpoint         │
│ privatelink.* →  │    queries        │ Resolves via Azure DNS   │
│ resolver IP      │                   │ + Private DNS zones      │
└──────────────────┘                   └──────────────────────────┘

Azure docs: DNS Private Resolver overview · Inbound endpoints

Inbound Endpoints: On-Premises Resolving Azure

When on-premises clients need to resolve Azure Private DNS zones - which they absolutely do once you’re using Private Endpoints - the inbound endpoint is the target.

You create an inbound endpoint in a dedicated subnet (minimum /28, maximum /24 in current service constraints). The subnet must be delegated to Microsoft.Network/dnsResolvers. The resolver gets a private IP in that subnet, say 10.0.5.4. On your on-premises DNS servers, you configure conditional forwarders:

privatelink.blob.core.windows.net → 10.0.5.4
privatelink.database.windows.net → 10.0.5.4
privatelink.vaultcore.azure.net → 10.0.5.4
privatelink.azurewebsites.net → 10.0.5.4

When an on-premises client queries mystorageaccount.blob.core.windows.net, the on-premises DNS server follows the CNAME to mystorageaccount.privatelink.blob.core.windows.net, hits the conditional forwarder, sends the query to 10.0.5.4, and the resolver returns the private IP from the linked Private DNS zone. The exact same flow the forwarder VMs handled - except now it’s a managed service with built-in HA across availability zones.
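The subnet sizing rule for endpoint subnets (/28 minimum, /24 maximum) is easy to get wrong when carving up a hub address space. The following is a minimal sketch of a pre-deployment check using only Python's standard library. The function name and the example address ranges are our own illustrations, and the check does not verify the delegation to Microsoft.Network/dnsResolvers, which has to be confirmed in Azure itself.

```python
import ipaddress
from typing import List

# Prefix-length bounds quoted in this article for resolver endpoint subnets.
LARGEST_ALLOWED_PREFIX = 24   # a /24 is the biggest subnet allowed
SMALLEST_ALLOWED_PREFIX = 28  # a /28 is the smallest subnet allowed


def check_endpoint_subnet(subnet_cidr: str, vnet_cidr: str) -> List[str]:
    """Return a list of problems; an empty list means the subnet size looks usable."""
    problems = []
    subnet = ipaddress.ip_network(subnet_cidr, strict=True)
    vnet = ipaddress.ip_network(vnet_cidr, strict=True)

    if not subnet.subnet_of(vnet):
        problems.append(f"{subnet} is not inside VNet range {vnet}")
    if subnet.prefixlen > SMALLEST_ALLOWED_PREFIX:
        problems.append(f"{subnet} is smaller than /{SMALLEST_ALLOWED_PREFIX}")
    if subnet.prefixlen < LARGEST_ALLOWED_PREFIX:
        problems.append(f"{subnet} is larger than /{LARGEST_ALLOWED_PREFIX}")
    return problems


if __name__ == "__main__":
    # Hypothetical hub range; 10.0.5.0/28 matches the 10.0.5.4 example above.
    print(check_endpoint_subnet("10.0.5.0/28", "10.0.0.0/16"))
    print(check_endpoint_subnet("10.0.5.0/29", "10.0.0.0/16"))
```

Returning a list of problems rather than raising on the first one lets a pipeline report every issue with a proposed subnet in one pass.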

Outbound Endpoints: Azure Resolving On-Premises

Outbound endpoints handle the other direction. Azure VMs that need to resolve on-premises domains get their queries forwarded to on-premises DNS servers.

You create an outbound endpoint in another dedicated subnet (same /28 minimum, /24 maximum, delegated to Microsoft.Network/dnsResolvers). Then you create a DNS forwarding ruleset and attach it to the outbound endpoint. The ruleset contains rules like:

Domain: corp.contoso.com
Target DNS servers: 10.100.1.10, 10.100.1.11 (on-premises DCs)

Domain: legacy.internal
Target DNS servers: 10.100.2.5

The ruleset can be linked to multiple VNets, so one ruleset can serve your entire hub-spoke topology. When a VM in a linked VNet queries server1.corp.contoso.com, the resolver forwards it through the outbound endpoint to the on-premises domain controllers. Everything else falls through to Azure DNS as normal.
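To make the "forward matching domains, let everything else fall through" behaviour concrete, here is a small Python model of rule selection. It is a sketch under assumptions, not the service's implementation: we assume the most specific (longest) matching suffix wins and that matching happens on whole labels. The `RULESET` dictionary and function names are hypothetical, and the trailing dot on each domain reflects how fully qualified names are conventionally written.

```python
from typing import Dict, List, Optional

# Hypothetical in-memory copy of the example ruleset from this article.
RULESET: Dict[str, List[str]] = {
    "corp.contoso.com.": ["10.100.1.10", "10.100.1.11"],
    "legacy.internal.": ["10.100.2.5"],
}


def normalise(name: str) -> str:
    """Lower-case the name and make sure it ends with a trailing dot."""
    name = name.strip().lower()
    return name if name.endswith(".") else name + "."


def pick_targets(query: str, ruleset: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return the targets of the most specific matching rule.

    None means no rule matched, i.e. the query would fall through to Azure DNS.
    """
    qname = normalise(query)
    rules = {normalise(domain): targets for domain, targets in ruleset.items()}
    # Match whole labels only, so "xcorp.contoso.com." does not match
    # the rule for "corp.contoso.com.".
    matches = [d for d in rules if qname == d or qname.endswith("." + d)]
    if not matches:
        return None
    return rules[max(matches, key=len)]


if __name__ == "__main__":
    print(pick_targets("server1.corp.contoso.com", RULESET))
    print(pick_targets("mystorageaccount.blob.core.windows.net", RULESET))
```

A model like this is handy for reviewing a proposed ruleset before it is deployed: feed it the names your workloads actually query and confirm each one lands where you expect.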

One important warning: if a forwarding ruleset contains a rule that points to the resolver’s own inbound endpoint, do not link that ruleset to the same VNet where the inbound endpoint is deployed. This creates a DNS resolution loop where the outbound endpoint forwards to the inbound endpoint, which resolves via Azure DNS, which hits the ruleset again. Microsoft documents this as a known misconfiguration to avoid.
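The loop condition described above can be caught with a simple review-time check. The sketch below works on a hypothetical in-memory description of a ruleset (the `Ruleset` class, VNet names, and the privatelink rule are illustrative, not an Azure SDK model) and flags any rule that targets the inbound endpoint IP while the ruleset is linked to the VNet hosting that endpoint.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Ruleset:
    """Hypothetical, simplified view of a DNS forwarding ruleset."""
    name: str
    rules: Dict[str, List[str]]                  # domain -> target DNS server IPs
    linked_vnets: List[str] = field(default_factory=list)


def find_loop_risks(ruleset: Ruleset, inbound_ip: str, inbound_vnet: str) -> List[str]:
    """Return the domains whose rules could cause a resolution loop.

    A rule is risky when it forwards to the inbound endpoint IP AND the
    ruleset is linked to the VNet where that inbound endpoint lives.
    """
    if inbound_vnet not in ruleset.linked_vnets:
        return []
    return sorted(
        domain for domain, targets in ruleset.rules.items() if inbound_ip in targets
    )


if __name__ == "__main__":
    rs = Ruleset(
        name="hub-ruleset",
        rules={
            "corp.contoso.com.": ["10.100.1.10", "10.100.1.11"],
            "privatelink.blob.core.windows.net.": ["10.0.5.4"],  # points at inbound IP
        },
        linked_vnets=["hub-vnet", "spoke-a"],
    )
    print(find_loop_risks(rs, inbound_ip="10.0.5.4", inbound_vnet="hub-vnet"))
```

The check is deliberately narrow: it only encodes the one misconfiguration named in this section and says nothing about other ways DNS forwarding can loop.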

Azure docs: Outbound endpoints and rulesets · DNS forwarding rulesets

Architecture Patterns

Single-region hub-spoke. Deploy one resolver in the hub VNet with one inbound and one outbound endpoint. Link the forwarding ruleset to all spoke VNets. On-premises conditional forwarders point at the inbound endpoint IP. In our experience, this covers the majority of deployments.

There are two main design approaches for how spoke VNets consume the resolver. The distributed approach (described in the migration section below) uses Azure default DNS (168.63.129.16) on spoke VNets and links the forwarding ruleset to each spoke for outbound forwarding. The centralized approach sets the resolver’s inbound endpoint IP as the custom DNS server on each spoke VNet, routing all DNS through the resolver. Both are valid. The distributed approach is simpler for most hub-spoke topologies. The centralized approach gives you more control over query routing but requires the inbound endpoint to handle all DNS traffic from all linked VNets.

Multi-region. Deploy a resolver in each regional hub. Each gets its own inbound endpoint IP. On-premises DNS servers need conditional forwarders to both regional IPs, with the local region preferred. Private DNS zones must be linked to all VNets in all regions.

Multi-hub with Azure Virtual WAN. The resolver deploys into a spoke VNet connected to the vWAN hub, not into the vWAN hub itself (you can’t deploy resources directly into vWAN hubs). Route DNS traffic to the resolver spoke through the vWAN routing tables.

A Note on Regional Resiliency

DNS Private Resolver provides built-in zone redundancy within a single region, so it survives availability zone failures. But zone redundancy is not the same as cross-region disaster recovery. If the resolver’s region goes down entirely, DNS resolution through that resolver goes down with it. On-premises conditional forwarders pointing at that resolver’s inbound IP will fail.

For enterprises that need cross-region DNS resilience, deploy resolver instances in multiple regions (as described in the multi-region pattern above) and configure on-premises DNS servers with conditional forwarders to both regional inbound IPs. This way, if one region fails, DNS queries fall through to the resolver in the surviving region.
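The fall-through behaviour can be pictured with a short Python model. In practice the on-premises DNS server does this itself once a conditional forwarder lists both inbound IPs; the sketch only illustrates the ordering logic. The second IP (10.50.5.4), the `ResolverUnavailable` exception, and the injected `query` function are all hypothetical stand-ins so the example runs without a network.

```python
from typing import Callable, Sequence, Tuple


class ResolverUnavailable(Exception):
    """Raised by the query function when a resolver does not answer."""


def resolve_with_failover(
    name: str,
    resolver_ips: Sequence[str],
    query: Callable[[str, str], str],
) -> Tuple[str, str]:
    """Try each regional inbound IP in order; return (ip_used, answer)."""
    errors = []
    for ip in resolver_ips:
        try:
            return ip, query(name, ip)
        except ResolverUnavailable as exc:
            errors.append(f"{ip}: {exc}")
    raise ResolverUnavailable("all resolvers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def fake_query(name: str, resolver_ip: str) -> str:
        # Simulate the primary region being down.
        if resolver_ip == "10.0.5.4":
            raise ResolverUnavailable("timeout")
        return "10.1.2.5"

    # Local region first, remote region second.
    print(resolve_with_failover(
        "mystorageaccount.privatelink.blob.core.windows.net",
        ["10.0.5.4", "10.50.5.4"],
        fake_query,
    ))
```

Note that the surviving region can only return the right answer if the Private DNS zones are linked to its VNet as well, which is why the multi-region pattern calls for linking zones in all regions.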

The Migration: Replacing Your DNS VMs

The migration path isn’t complicated, but it needs to be sequenced carefully. DNS failures are total failures - nothing works when name resolution breaks.

- Deploy the resolver alongside your existing forwarder VMs. Create both endpoints and configure the forwarding ruleset with your on-premises domains

- Test from a single spoke. Change one non-production spoke’s VNet DNS settings to Azure default (168.63.129.16) and link the forwarding ruleset to that VNet. Validate on-premises resolution works

- Update on-premises conditional forwarders. Add the resolver’s inbound endpoint IP as a secondary target alongside your existing forwarder VM IPs

- Migrate spokes incrementally. Move spoke VNets one at a time - update VNet DNS settings and link the forwarding ruleset

- Cut over on-premises. Update conditional forwarders to use only the resolver’s inbound IP

- Decommission the VMs. Wait a week. Confirm everything is stable. Shut them down

The critical detail: when VNets use Azure default DNS (168.63.129.16), Azure DNS resolves linked Private DNS zones natively. The outbound endpoint is only for forwarding to external DNS servers like on-premises DCs.
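Each migration step is worth validating with an actual lookup rather than by inspecting configuration. The following is a rough sketch of a check you could run from a VM in the spoke under test: it resolves a list of names and reports whether each one came back as a private address. The function name is our own, the name list is an assumption you would replace with your own Private Endpoint and on-premises hostnames, and the resolver is injectable so the logic can be exercised without network access.

```python
import ipaddress
import socket
from typing import Callable, Dict, Iterable, Optional, Tuple


def check_private_resolution(
    names: Iterable[str],
    resolve: Callable[[str], str] = socket.gethostbyname,
) -> Dict[str, Tuple[Optional[str], bool]]:
    """Map each name to (address, ok); ok means it resolved to a private IP."""
    results = {}
    for name in names:
        try:
            address = resolve(name)
            ok = ipaddress.ip_address(address).is_private
        except (OSError, ValueError):
            # Lookup failed, or the resolver returned something that is not an IP.
            address, ok = None, False
        results[name] = (address, ok)
    return results


if __name__ == "__main__":
    # Example names from this article; substitute your own.
    to_check = [
        "mystorageaccount.blob.core.windows.net",
        "server1.corp.contoso.com",
    ]
    for name, (address, ok) in check_private_resolution(to_check).items():
        print(f"{'OK  ' if ok else 'FAIL'} {name} -> {address}")
```

A name that resolves to a public address is as much a failure here as one that does not resolve at all, since it usually means a Private DNS zone link is missing.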

The DNS Resolution Chain with Private Endpoints

After migration, the full resolution chain for a Private Endpoint looks like this:

From Azure VMs:

VM queries mystorageaccount.blob.core.windows.net
  → Azure DNS (168.63.129.16)
  → CNAME to mystorageaccount.privatelink.blob.core.windows.net
  → Private DNS zone (linked to VNet) returns 10.1.2.5
  → VM connects to private IP

From on-premises:

Client queries mystorageaccount.blob.core.windows.net
  → On-premises DNS
  → CNAME to mystorageaccount.privatelink.blob.core.windows.net
  → Conditional forwarder sends to DNS Private Resolver inbound IP (10.0.5.4)
  → Resolver queries Azure DNS + linked Private DNS zone
  → Returns 10.1.2.5 to on-premises client
  → Client connects via ExpressRoute/VPN to private IP

The resolver sits in the exact same position the forwarder VMs did. The difference is you don’t manage the infrastructure underneath it.
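The CNAME-then-A-record walk in both chains can be modelled in a few lines of Python. This is a toy illustration only: the `RECORDS` table is a hypothetical stand-in for what Azure DNS and the linked Private DNS zone hold, and the hop limit is our own safeguard against circular CNAMEs.

```python
from typing import Dict, List, Tuple

# Toy record set mirroring the chain above: name -> (record type, value).
RECORDS: Dict[str, Tuple[str, str]] = {
    "mystorageaccount.blob.core.windows.net": (
        "CNAME", "mystorageaccount.privatelink.blob.core.windows.net"),
    "mystorageaccount.privatelink.blob.core.windows.net": ("A", "10.1.2.5"),
}


def resolve_chain(name: str, records: Dict[str, Tuple[str, str]], max_hops: int = 8) -> List[str]:
    """Follow CNAMEs until an A record is found; return every step visited."""
    steps = [name]
    for _ in range(max_hops):
        if name not in records:
            raise LookupError(f"no record for {name}")
        record_type, value = records[name]
        steps.append(value)
        if record_type == "A":
            return steps
        name = value  # CNAME: continue with the alias target
    raise LookupError("too many CNAME hops")


if __name__ == "__main__":
    for step in resolve_chain("mystorageaccount.blob.core.windows.net", RECORDS):
        print(step)
```

If the privatelink A record is removed from the table, the walk fails at the second hop, which mirrors what happens when a Private DNS zone is not linked to the VNet doing the resolution.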

Cost

The resolver isn’t free, and the pricing catches some teams off guard. As of the original publication, each inbound or outbound endpoint cost roughly $180/month (check the current pricing page for your region, as prices vary). A typical deployment with one inbound and one outbound endpoint runs in the $300-400/month range. DNS queries add a small per-query cost on top, negligible for most workloads.

Compare that to two DNS forwarder VMs for HA: small VMs at $50-80/month each, plus OS licensing, plus monitoring, plus the operational cost of patching and troubleshooting. The resolver is more expensive in pure compute terms, but cheaper when you account for the operational burden you’re eliminating. For multi-region deployments, multiply by the number of regions.
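The arithmetic behind that comparison is simple enough to script. The sketch below uses only the rough figures quoted in this section ($180 per endpoint, $50-80 per VM) and deliberately leaves out per-query charges, OS licensing, monitoring, and staff time, so treat the output as a starting point for your own numbers rather than a quote.

```python
from typing import Tuple

# Rough monthly figures quoted in this article; check current pricing before relying on them.
ENDPOINT_MONTHLY_USD = 180
VM_MONTHLY_USD_RANGE = (50, 80)


def resolver_monthly(endpoints_per_region: int = 2, regions: int = 1) -> int:
    """Endpoint cost only: one inbound plus one outbound endpoint per region by default."""
    return ENDPOINT_MONTHLY_USD * endpoints_per_region * regions


def vm_compute_monthly(vms_per_region: int = 2, regions: int = 1) -> Tuple[int, int]:
    """(low, high) compute cost for the forwarder VMs, excluding licensing and operations."""
    low, high = VM_MONTHLY_USD_RANGE
    count = vms_per_region * regions
    return low * count, high * count


if __name__ == "__main__":
    for regions in (1, 2):
        print(
            f"{regions} region(s): resolver ${resolver_monthly(regions=regions)}/month, "
            f"VM compute ${vm_compute_monthly(regions=regions)}/month"
        )
```

For a single region this gives $360/month for the resolver against $100-160/month of raw VM compute, which is the gap the operational savings have to justify.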

Azure docs: DNS Private Resolver pricing

Time to Retire the VMs

If you’re running a hybrid Azure environment with Private Endpoints and on-premises Active Directory DNS, this is a no-brainer upgrade. The forwarder VMs were always a workaround for a gap in Azure’s DNS capabilities. That gap is now closed.

The VMs were a liability. Every patching cycle, every failover event, every time someone changed a conditional forwarder on one VM but not the other - those were incidents waiting to happen. A managed service with built-in zone redundancy and no OS to maintain eliminates an entire class of operational risk.

If you haven’t migrated yet, the process is straightforward. Deploy the resolver alongside your existing VMs, test one spoke, roll forward, and decommission. The whole process takes a week if you’re cautious, a day if you’re not. By 2026, most enterprises we work with have already made this move. If yours hasn’t, it’s one of the easier operational wins available.

Related: Azure Private Link: How It Changed the Enterprise PaaS Playbook (private endpoint architecture at scale) · Azure Landing Zones: What I Wish I Had Known (hub-spoke deployment lessons) · Azure Firewall: When Cloud-Native Network Security Makes Sense (network security in the hub)
